How Inbound SEO Generated 50+ Monthly Sales Conversations for a B2B Data SaaS [Case Study]

Executive Summary

From Inbound Activity to Defensible Sales Conversations

This case study summarizes inbound content work done for a B2B data management SaaS company operating in a complex, technical buying environment (for confidentiality, the client is anonymized as “Company X”).

The objective was not to grow traffic for its own sake, but to make inbound content commercially reliable — content that could influence sales conversations and withstand revenue scrutiny.

At the outset, inbound performance was small but healthy. Rankings improved and traffic grew, but the results were difficult to defend internally.

Sales questioned lead quality, leadership asked what inbound was influencing, and attribution was inconsistent. Inbound activity was visible, but its commercial impact was not legible.

The turning point was a decision to reframe success around a sales-legible metric: sales conversations.

Traffic and rankings remained important, but they became supporting indicators rather than the goal.

Over time, this shift produced:

Consistent inbound influence on sales conversations

Sustained traffic growth (from ~1,200 to over 20,000 monthly visits)

Increased confidence in inbound as a reliable, defensible channel

This case does not suggest that SEO is fast, or that content alone drives revenue. It demonstrates a more practical outcome: Inbound becomes defensible when it is designed to influence sales conversations — not to accumulate traffic.

NOTE: For clarity, “sales conversations” refers to demo/consultation signups that progressed into active sales discussions.

Table of Contents:

Context & Problem

From inbound traffic to sales conversations

The commercial problem: Defensibility

What was broken: Where inbound failed under scrutiny

The decision shift: Reframing inbound around sales conversations

Opportunity Selection Logic

Selecting for buyer motion, not keyword volume

Identifying commercial keyword opportunities

Validating commercial JTBD keywords

Example: “Reduce S3 replication latency”

Outcome of the selection logic

Content Design Principles

Designing content sales could recognize

Resulting design outcome

Reporting & Outcomes

Measurement & reporting

Results

Key Takeaways & Implications

What made this defensible

When this approach is effective

Where it’s less effective

What this case actually demonstrates

Appendix: Measurement Artifacts

CONTEXT & PROBLEM

From Inbound Traffic to Sales Conversations

This case study describes how the Commercial Pipeline SEO Content System was applied to Company X — a B2B data management SaaS company selling into technical and operational buyers.

The software addressed complex data management and synchronization use cases, such as distributed collaboration on large datasets, VDI deployments, hybrid cloud storage, and disaster recovery.

The primary target audience was IT professionals, including both high-expertise segments (e.g., IT Administrators) and lower-expertise segments (e.g., IT Project Coordinators).

Buying decisions typically involved multiple stakeholders, long evaluation cycles, and detailed technical scrutiny.

Inbound content was expected to play a commercial role, not just generate awareness. Specifically, it was expected to:

Support evaluation

Generate sales conversations

Help buyers self-educate before engaging with sales

The challenge was not whether inbound mattered, but whether it could become a reliable and defensible growth channel that survived revenue scrutiny.

The Commercial Problem: Traffic Wasn’t the Issue. Defensibility Was.

Like many B2B SaaS teams, Company X was investing in content to support inbound growth.

But their content output was inconsistent. Though search visibility was improving and they were generating demo signups from a few pieces of content, they were unable to produce inbound content that influenced pipeline at scale.

Internally, inbound performance was difficult to defend.

Sales reported that inbound content delivered “low-quality leads.”

Leadership wanted tangible proof that content was influencing pipeline.

Attribution was inconsistent and often inconclusive.

Inbound activity existed, but its impact on sales conversations wasn’t always legible. As a result, content felt vulnerable — something that required explanation rather than something that could stand on its own.

The problem wasn’t that inbound “wasn’t working.” The problem was that it couldn’t always survive revenue-level scrutiny.

What Was Broken: Where Inbound Failed Under Scrutiny

The team’s underlying issue wasn’t content quality — it was commercial alignment.

Specifically:

Their keyword targeting skewed heavily toward informational topics with high monthly search volumes

Success of inbound efforts was measured primarily through traffic and rankings

Content wasn’t consistently mapped to buyer roles or evaluation moments

There was no clear line from content consumption to sales conversations

None of these choices were unreasonable in isolation. But all of them rested on a common assumption, that more traffic naturally leads to more demos and customers, and together they made inbound difficult to defend when commercial questions surfaced.

In short: Inbound performance existed — but it wasn’t structured around sales-legible outcomes.

The Decision Shift: Reframing Inbound Around Sales Conversations

The turning point wasn’t a new tactic. It was a realignment of how Company X’s content strategy was designed and measured.

Instead of optimizing content primarily for traffic growth, inbound was reframed around a different success metric: sales conversations.

Sales conversations were chosen deliberately because they were:

Legible to sales

Meaningful to leadership

Defensible under revenue scrutiny, without requiring attribution gymnastics

Unlike attribution models or revenue dashboards, sales conversations could be discussed credibly across marketing, sales, and leadership without requiring shared assumptions.

This decision changed what qualified as a “good” content opportunity.

High-volume keywords were deprioritized if they didn’t map to evaluation or decision intent. Content was selected and designed based on whether it could realistically influence a sales conversation — not whether it could generate sessions.

Traffic still mattered. Rankings still mattered.

But they became supporting indicators, not the goal, and their value was contextual: what mattered was ranking for the right keywords and attracting the right traffic.

Over time, this shift produced two outcomes:

Inbound content consistently influenced 50+ sales conversations per month

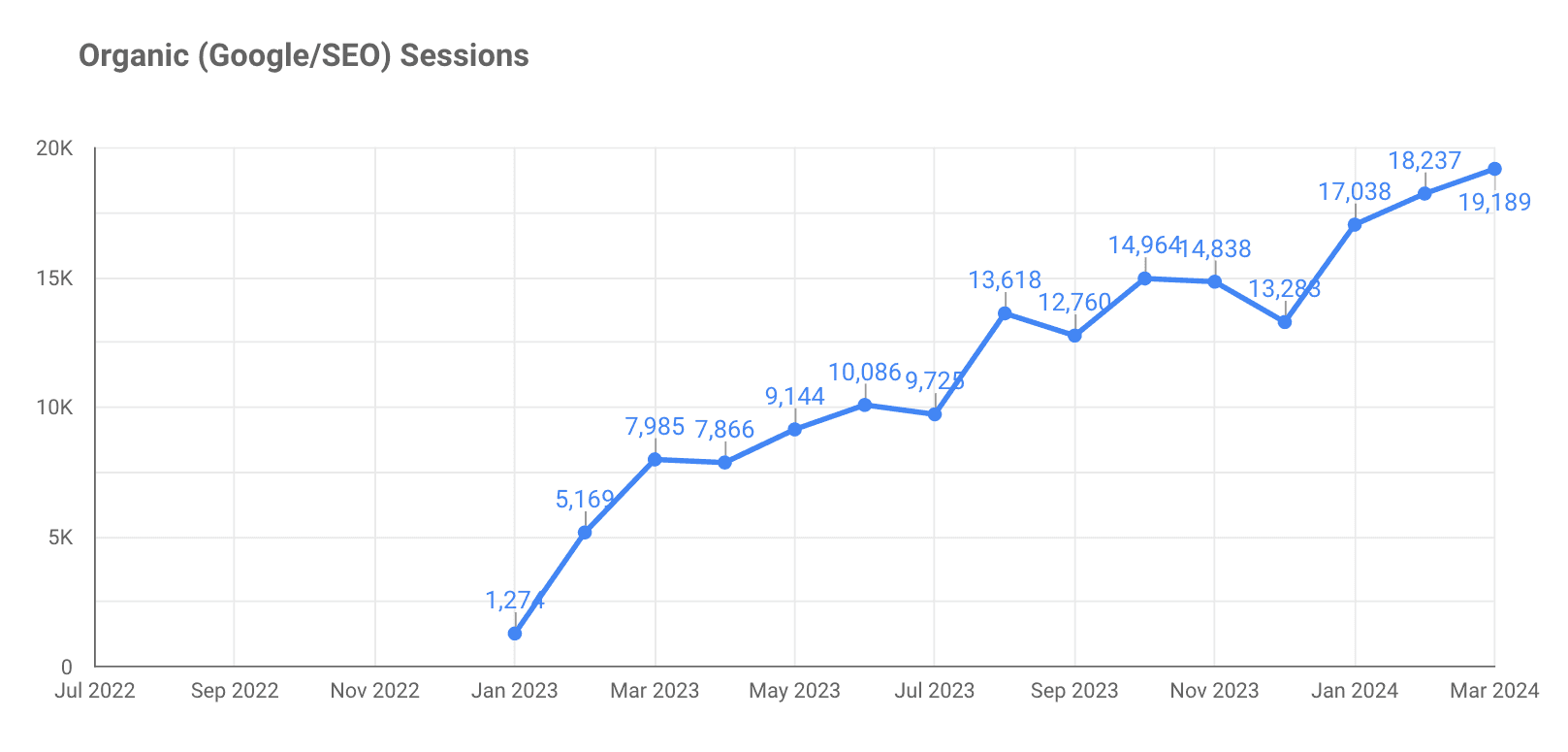

Organic traffic compounded significantly, growing from roughly 1,200 monthly visits to over 20,000 per month (an increase of more than 1,500%) across the engagement

More importantly, inbound moved from being a channel that needed justification to a channel that could be discussed confidently in commercial terms.

OPPORTUNITY SELECTION LOGIC

Selecting for Buyer Motion, Not Keyword Volume

Once inbound success was reframed around sales conversations, the primary selection question changed from “Can we rank for this?” to “Would the right buyer search this while evaluating a decision?”

Opportunity selection focused on identifying evaluation-relevant queries — searches that indicated buyers were actively comparing options, validating approaches, or de-risking decisions.

Many keywords appeared attractive on paper but lacked commercial gravity. These were deprioritized in favor of opportunities defined by:

Specificity of the underlying problem

Proximity to an active buying decision

Clarity of the buyer role searching

The intent frame behind the query

Search volume was not used as a selection or performance metric.

If a query could plausibly influence a sales conversation — even at very low volume — it was considered viable.

In practice, many of the highest-performing keywords averaged fewer than 100 monthly searches. One of the earliest targets had approximately 20 monthly searches, yet remained among the strongest contributors to sales conversations over the course of the engagement.

Identifying Commercial Keyword Opportunities

This section is included to illustrate the decision logic behind opportunity selection — not as a prescriptive checklist.

Commercial opportunities were grouped into three categories:

Category Keywords: Queries indicating the searcher is looking for products in our category (e.g., “data replication software”).

Competitor/Alternative Keywords: Terms that indicate the searcher is looking for alternatives to a tool they’re already using or evaluating (e.g., “GoodSync alternatives”).

Job-To-Be-Done (JTBD) Keywords: Queries tied to executing a specific job related to Company X’s product (e.g., “reduce S3 replication latency”).

Category and competitor keywords were relatively straightforward to assess. But JTBD keywords required additional scrutiny.

Many JTBD queries were product-relevant but commercially inert. Relevance alone did not reliably indicate whether a query would influence a sales conversation.

To avoid investing in content that could not survive revenue scrutiny, JTBD opportunities were evaluated using a simple decision framework.

Validating Commercial JTBD Keywords

The following logic was used to assess whether a JTBD keyword had commercial potential.

1. Is the job relevant to a buying decision?

For a JTBD query to be commercially viable, the job behind the search needed to map to a real buying decision — such as implementation, migration, evaluation, compliance, expansion, or failure recovery.

Queries that reflected execution steps within these contexts were more likely to surface during active evaluation.

2. Does the job carry meaningful risk?

Commercial JTBD searches are rarely driven by curiosity. They’re driven by risk reduction.

Greater financial, operational, regulatory, or career risk correlated strongly with commercial potential. When the cost of failure was high, searchers were more likely to seek guidance that informed vendor evaluation.

3. How much uncertainty exists behind the job?

Uncertainty amplifies perceived risk.

Two uncertainty patterns consistently correlated with commercial intent:

Compliance Uncertainty: Where mistakes carried regulatory, security, or governance consequences.

Variability Uncertainty: Where the correct solution depended heavily on context (e.g., the answer changed for large companies vs. small companies).

Queries exhibiting one or both patterns were more likely to influence evaluation-stage buyers.
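The three questions above can be read as a simple screen. The sketch below is one hypothetical way to encode it in Python; the class, field names, and the rule that all three answers must be "yes" are assumptions made for illustration, not the scoring method used in the engagement. Each input is an editorial judgment, and search volume is intentionally absent.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Buying contexts named in question 1 of the framework.
BUYING_CONTEXTS = {
    "implementation", "migration", "evaluation",
    "compliance", "expansion", "failure recovery",
}


@dataclass
class JtbdCandidate:
    """A JTBD query plus the judgments recorded for it (hypothetical fields)."""
    query: str
    buying_context: Optional[str] = None  # None if no buying decision applies
    risk_types: Set[str] = field(default_factory=set)  # e.g. {"operational"}
    compliance_uncertainty: bool = False
    variability_uncertainty: bool = False


def passes_jtbd_screen(c: JtbdCandidate) -> bool:
    """Assumed rule: all three questions must be answered 'yes'.

    Search volume is deliberately not an input.
    """
    maps_to_decision = c.buying_context in BUYING_CONTEXTS
    carries_risk = bool(c.risk_types)
    has_uncertainty = c.compliance_uncertainty or c.variability_uncertainty
    return maps_to_decision and carries_risk and has_uncertainty


# Illustrative judgments only; the field values are assumptions, not client data.
s3 = JtbdCandidate(
    query="reduce S3 replication latency",
    buying_context="evaluation",
    risk_types={"operational"},
    variability_uncertainty=True,
)
inert = JtbdCandidate(query="what is data replication")  # hypothetical low-intent query

print(passes_jtbd_screen(s3))     # True
print(passes_jtbd_screen(inert))  # False
```

In practice the judgments matter far more than the code: the screen only makes explicit which answers were recorded for each query and why it was kept or dropped.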

Example: “Reduce S3 Replication Latency”

The JTBD query “reduce S3 replication latency” appeared informational on the surface, but consistently surfaced during evaluation-stage buying decisions.

The underlying job carried significant operational risk — particularly in environments where data availability, compliance, and workflow reliability were critical.

The query also exhibited high uncertainty. The appropriate solution varied based on factors such as dataset size, system architecture, regulatory constraints, and failure tolerance.

These characteristics made the keyword commercially viable despite low search volume. Once addressed, it consistently influenced sales conversations.

The framework validated this pattern repeatedly, allowing inbound effort to be concentrated on opportunities that could hold up under scrutiny.

Outcome of the Selection Logic

By applying this logic, inbound efforts were intentionally narrowed.

Noise was reduced early.

Content was concentrated on queries that plausibly influenced evaluation behavior.

Inbound performance became easier to explain — and easier to defend.

This selection discipline mattered more than scale. It ensured that content investment aligned with buyer motion rather than keyword volume, and that inbound contributions could be discussed confidently in commercial terms.

CONTENT DESIGN PRINCIPLES

Designing Content Sales Could Recognize

Once opportunities were selected, content design followed a single constraint: If a buyer matching the ideal customer profile (ICP) came across this page during a buying decision, would it help them move forward?

Content was designed to support evaluation-stage considerations and reduce buyer uncertainty.

This required shifting away from generalized SEO patterns toward a more deliberate design posture.

Principle 1: Content must address decision moments

Each page was designed around a specific decision moment rather than a broad topic.

Instead of asking, “What information should this page include?”, the guiding question became, “What decision is the reader trying to make right now?”

This meant avoiding generic “best practices” content, and focusing instead on:

Discussing the specific use cases and pain points of the ICPs most likely to search each keyword

Tailoring content to the expertise level of the target reader (e.g., IT Administrators vs. IT Project Coordinators)

Discussing in depth how specific product features resolved specific pain points

Content that aligned with an identifiable decision context — such as evaluation, comparison, implementation, or risk assessment — was more likely to surface during sales conversations.

Pages without a clear decision context were deprioritized.

Principle 2: Content must satisfy ICP Intent Frames

Each article was designed around the buyer’s Intent Frame — the decision context shaping what the reader was trying to do, what risks they were attempting to reduce, and what kind of answer would enable them to move forward confidently.

The objective was not to cover every possible angle, but to create commercially relevant content that gave buyers what they needed to proceed.

Intent Frames were used to:

Create resonance by addressing the buyer’s lived experience

Instill confidence that the product could get buyers to their desired outcome within their specific constraints, while avoiding the negative outcomes they feared

Content that aligned with these frames consistently surfaced in sales conversations.

Principle 3: Risk reduction and pain points as organizing functions

Page structure was organized around risk reduction, pain points, and outcomes rather than product features.

Headers and subsections focused on:

Failure modes

Tradeoffs and constraints

Pain points and success metrics

This mirrored how buyers evaluated options internally, and aligned more closely with how sales conversations unfolded.

Principle 4: Sales legibility as a design requirement

Content was designed to be recognizable and usable by sales.

This meant:

Avoiding abstract positioning language

Using terminology buyers already introduced in conversations

Structuring pages so key insights could be referenced or shared

That recognition mattered more than conversion optimization, particularly in helping sales convert demos into customers.

Principle 5: Defensibility over optimization

Traditional SEO optimizations were applied selectively and only where they did not conflict with the primary goal.

Design decisions were evaluated by whether they increased the content’s ability to:

Hold up under internal scrutiny

Be explained to leadership

Support real buying conversations and decision moments

Help buyers arrive more informed — not prematurely converted

When optimization conflicted with clarity or credibility, it was deprioritized.

Resulting Design Outcome

The resulting content did not resemble conventional SEO articles.

Pages were more deliberate and less noisy — but significantly more useful in evaluation contexts.

Over time, this design discipline contributed to:

Increased alignment between sales and inbound content

More consistent use of content in deals

Improved confidence in inbound as a defensible commercial asset

REPORTING & OUTCOMES

Measurement and Reporting

Before reviewing outcomes, it’s important to clarify how success was measured.

Measurement was intentionally designed to support decision-making, not to maximize reporting depth.

Given the complexity of the buying cycle, no attempt was made to attribute revenue directly to individual pages. Instead, reporting focused on whether inbound content was showing up where sales and leadership expected it to matter.

The primary success signal was sales conversations — measured as demo/consultation signups that progressed into active sales discussions.

Supporting indicators included:

First-touch and assisted conversions tied to inbound content

Repeated appearance of specific pages in conversion paths

Sales references to inbound content during evaluation conversations

Traffic, rankings, and engagement metrics were monitored for diagnostic purposes only. They were not used as success criteria.

This approach reduced attribution debate and allowed inbound performance to be discussed calmly in commercial terms, even when outcomes varied by deal.

Where dashboards were used, they served as supporting artifacts, not decision drivers.
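To make the supporting indicators concrete, the sketch below counts how often each page appears as a first touch or as a later, assisting touch across a set of conversion paths. It is a minimal Python illustration under stated assumptions: the function name and the example URLs are hypothetical, and "assisted" is simplified here to mean any page viewed after the first touch, which is narrower than how analytics tools usually define it.

```python
from collections import Counter


def summarize_paths(paths):
    """Count first-touch and assisted appearances per page.

    `paths` is a list of conversion paths; each path is the ordered list of
    content pages a visitor viewed before a demo/consultation signup.
    """
    first_touch = Counter()
    assisted = Counter()
    for path in paths:
        if not path:
            continue
        first_touch[path[0]] += 1
        # Count each later page once per path so repeat views do not inflate it,
        # and do not double-count the first-touch page as an assist.
        for page in set(path[1:]) - {path[0]}:
            assisted[page] += 1
    return first_touch, assisted


# Hypothetical paths for illustration only.
paths = [
    ["/blog/a", "/blog/c"],
    ["/blog/b", "/blog/a"],
    ["/blog/a"],
]
first_touch, assisted = summarize_paths(paths)
print(first_touch.most_common())
print(assisted.most_common())
```

A page that keeps reappearing in either counter is the kind of "repeated appearance" signal described above; it supports a discussion with sales rather than proving attribution.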

In addition to conversation-level signals, sales feedback was used to validate downstream relevance.

The sales team noted that, during the period reviewed, approximately one out of every nine demos progressed to become a customer.

This was discussed internally as a confirmation of lead quality, not as a performance benchmark or attribution claim.

Results

The outcomes below are ordered by commercial relevance, not visibility metrics.

1. Sales-legible inbound influence

Inbound content consistently influenced 50+ sales conversations per month.

Sales increasingly referenced specific inbound content during evaluation conversations, improving confidence in inbound’s role in the buying process.

2. Inbound shifted from fragile to defensible

Inbound moved from a “nice-to-have” channel to a reliable baseline under revenue review.

Content performance could be explained without attribution gymnastics, allowing marketing leaders to defend inbound decisions calmly and concretely in commercial terms.

3. Sustained organic growth as a secondary outcome

As a byproduct of focusing on evaluation-stage content, organic traffic compounded from approximately 1,200 monthly visits to over 20,000 (growth of more than 1,500%).

Traffic growth supported discovery, but it was not used as the primary indicator of success.
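The growth figure can be sanity-checked from the two endpoints quoted above. The short Python sketch below treats 1,200 and 20,000 as exact values purely for illustration; the source gives both as approximations, so the result is a rough check rather than a reported metric.

```python
def percent_increase(start: float, end: float) -> float:
    """Return the percentage increase from start to end."""
    if start <= 0:
        raise ValueError("start must be positive")
    return (end - start) / start * 100


growth = percent_increase(1_200, 20_000)
multiple = 20_000 / 1_200
print(f"{growth:.0f}% increase, {multiple:.1f}x the starting traffic")
# prints "1567% increase, 16.7x the starting traffic"
```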

KEY TAKEAWAYS & IMPLICATIONS

What Made This Defensible

The results held up internally because they were structured around outcomes leadership already understood.

Specifically:

Success was measured by sales-legible signals, not abstract SEO metrics

Claims were bounded to influence, not attribution

Cause-and-effect logic was clear and explainable

No single dashboard or model was required to justify impact

Inbound no longer needed to be defended with theory.

It could be discussed using the same language as other revenue-adjacent efforts.

That shift reduced internal friction and increased confidence in content as a channel.

When This Approach Is Effective

This approach works best in environments where:

Buying cycles are complex and research-heavy

Multiple stakeholders influence decisions

Buyers actively compare solutions before engaging sales

Content is expected to support evaluation, not just awareness

Where It’s Less Effective

It is not designed for:

Commodity products with minimal differentiation

Teams optimizing purely for brand reach or impressions

Industries/products where customers don’t do product research online (for example, I applied this method to a cloud broadcasting software company, but was unable to generate any lead conversions across the entire engagement)

Being explicit about these constraints was important to maintaining credibility.

What This Case Actually Demonstrates

This case does not argue that SEO is fast.

It doesn’t claim that content alone drives revenue (though it can for businesses with short, impulsive buying cycles). And it doesn’t suggest that traffic growth is meaningless.

What it demonstrates is simpler, and more practical:

Inbound becomes defensible when it is designed to influence sales conversations, not to accumulate traffic.

When content is selected, designed, and evaluated through that lens, it stops being an experiment that needs justification and starts behaving like a commercial asset that can stand on its own.

APPENDIX: MEASUREMENT ARTIFACTS

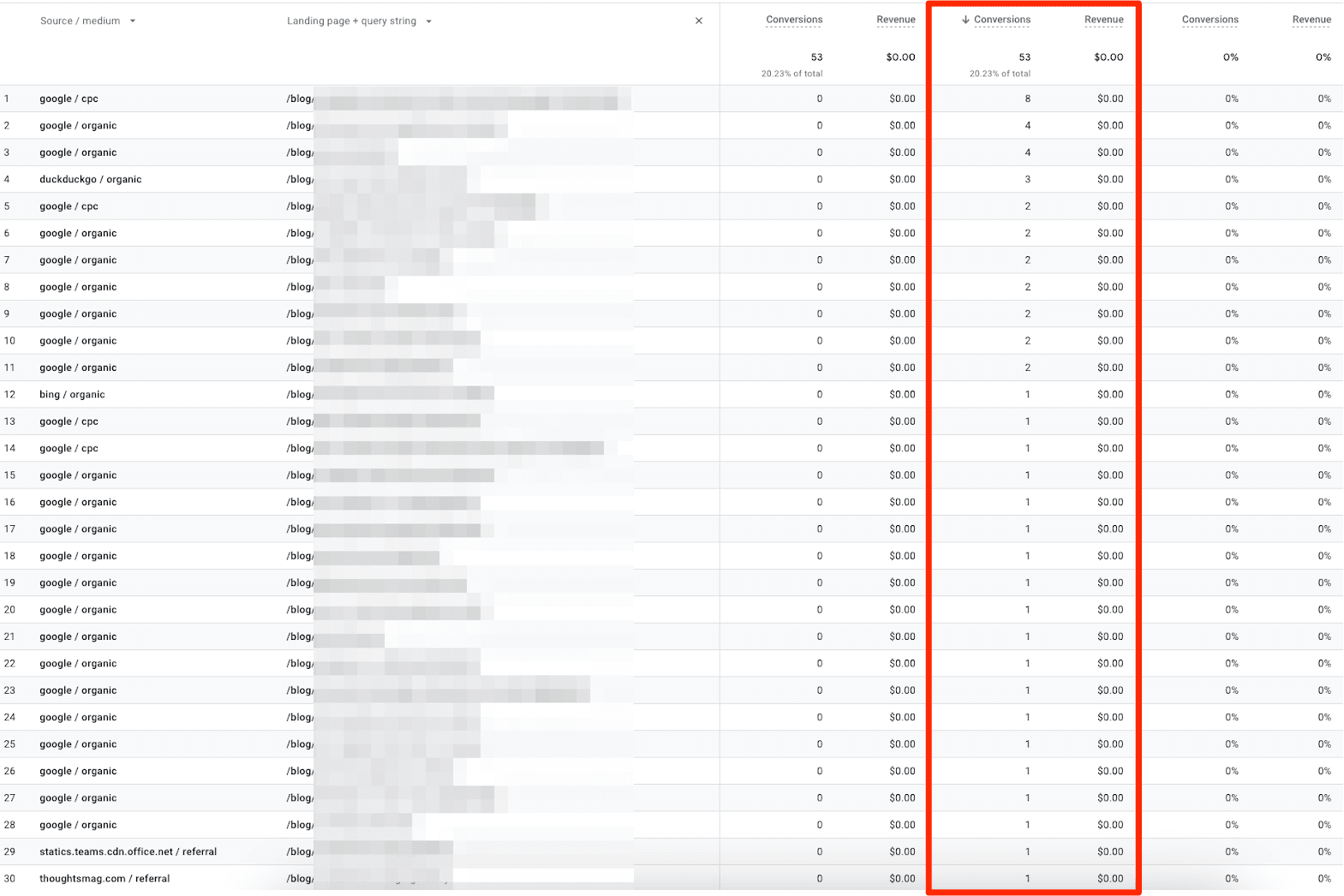

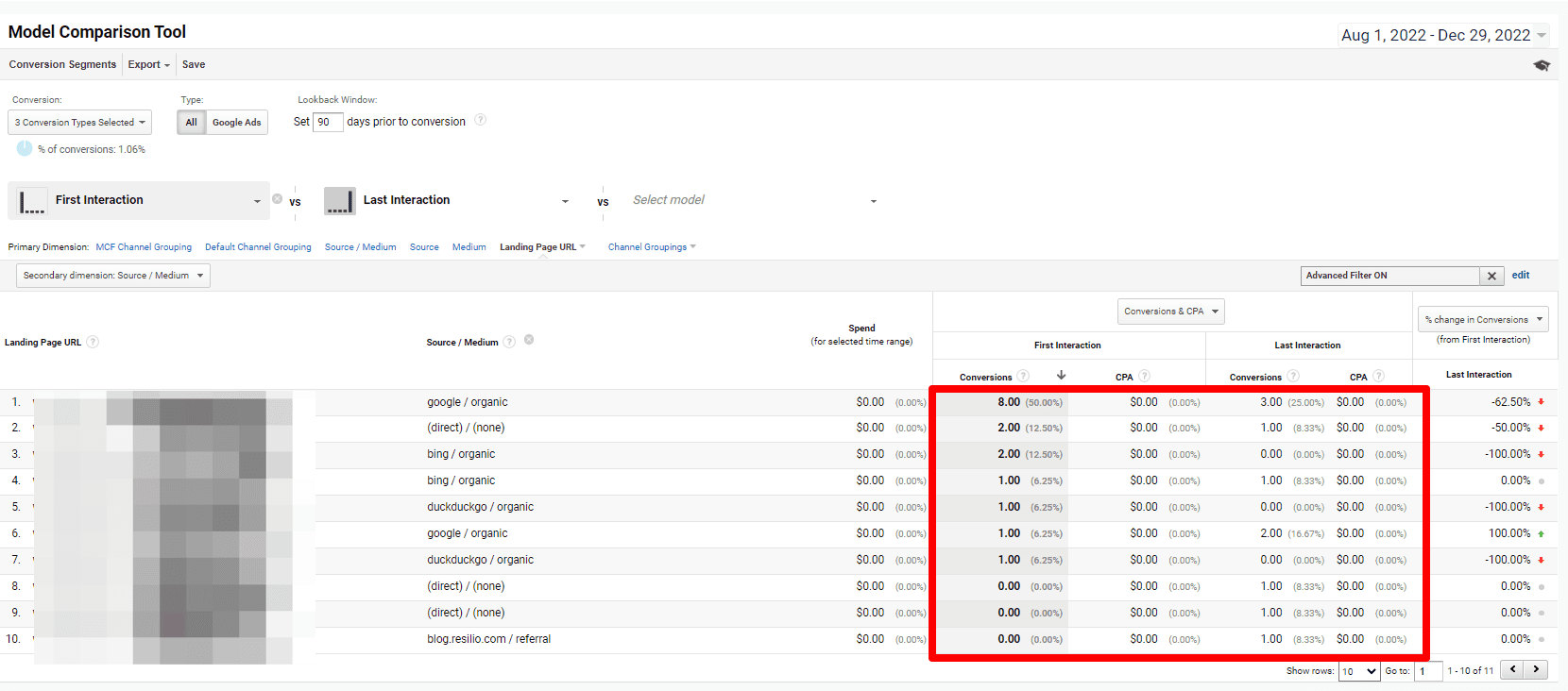

The screenshots in this appendix are included as illustrative artifacts, not as claims of direct revenue attribution.

They are provided to show how inbound content surfaced within conversion paths and sales-relevant interactions over time — particularly as first-touch or assisted interactions.

Given the complexity of the buying cycle, these artifacts were never used as standalone proof of performance.

They supported internal discussion alongside sales feedback and pipeline observation.

URLs and identifying details have been intentionally obscured to protect client information.

Inbound Content Appearing in Conversion Paths

Illustrative view of inbound content contributing to first-touch and assisted conversions over a 30-day lookback window (November 2023).

Early Inbound Traction on Conversion Paths

Illustrative view of inbound content contributing to first-touch and assisted conversions across the first five months of the engagement (August 2022 to December 2022).

Traffic Growth During the Engagement

Illustrative view of traffic growth across a 22-month period (the engagement ran from July 2022 to May 2024).